Conference Presentation

Large-Scale Deployment of Mammography AI Demonstrates Robust Categorization and Suggests Benefit of More Granularity Than Binary Triage Categories

RSNA 2022

Dr. Bryan Haslam

November 29, 2022

Purpose

Several types of AI tools are FDA-cleared for screening mammography, including triage (CADt), computer-aided detection (CADe), and computer-aided diagnosis (CADx) products, but there are few data on large-scale deployments of such tools in clinical practice. Although some AI triage products have shown promising results in domains such as intracranial hemorrhage, there are fewer indications of immediate value for triage in mammography, perhaps because cancer prevalence in a screening population is very low. Here we sought to assess radiologists’ performance by binary triage category and by more granular AI categories.

Materials and Methods

An FDA-cleared CADt algorithm was deployed at 151 clinical sites across multiple US states. Data were collected from 519,281 screening mammograms interpreted by 223 radiologists over a period of more than 10 months. The data collected for each mammogram included the product output (“suspicious” or “not suspicious”), the underlying AI numeric score, the radiologist’s BI-RADS score, and all biopsy outcomes within 6 months. The AI scores were also retrospectively binned into four suspicion levels containing ~25% (“Minimal”), ~50% (“Low”), ~20% (“Intermediate”) and ~5% (“High”) of all mammograms. Cancer detection rate (CDR) and recall rate were assessed for each suspicion level.
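The binning and per-level metrics described above can be sketched roughly as follows. This is a minimal illustration, not the study's actual pipeline: the cut points at the 25th, 75th and 95th percentiles are inferred from the approximate bin shares, the function names are hypothetical, and CDR per 1,000 exams and recall rate in percent are assumed conventions that the abstract does not state.

```python
from bisect import bisect_right
from collections import defaultdict

LEVELS = ["Minimal", "Low", "Intermediate", "High"]
# Cumulative shares implied by the ~25/50/20/5 split. Assumption: the
# abstract gives only approximate bin sizes, not the actual thresholds.
CUMULATIVE_SHARES = [0.25, 0.75, 0.95]


def score_cutoffs(scores, shares=CUMULATIVE_SHARES):
    """Return score thresholds at the given cumulative shares (empirical quantiles)."""
    ordered = sorted(scores)
    n = len(ordered)
    return [ordered[min(n - 1, int(share * n))] for share in shares]


def suspicion_level(score, cutoffs):
    """Map one score to a level; scores below the first cutoff are Minimal."""
    return LEVELS[bisect_right(cutoffs, score)]


def rates_by_level(exams, cutoffs):
    """Aggregate (score, recalled, cancer) tuples per suspicion level.

    Returns {level: (cdr_per_1000, recall_rate_percent, n_exams)}.
    """
    counts = defaultdict(lambda: [0, 0, 0])  # exams, recalls, cancers
    for score, recalled, cancer in exams:
        tally = counts[suspicion_level(score, cutoffs)]
        tally[0] += 1
        tally[1] += int(recalled)
        tally[2] += int(cancer)
    return {
        level: (1000 * cancers / n, 100 * recalls / n, n)
        for level, (n, recalls, cancers) in counts.items()
    }
```

Deriving the cutoffs from the empirical score distribution keeps the bin sizes close to the target shares regardless of how the underlying scores are scaled.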

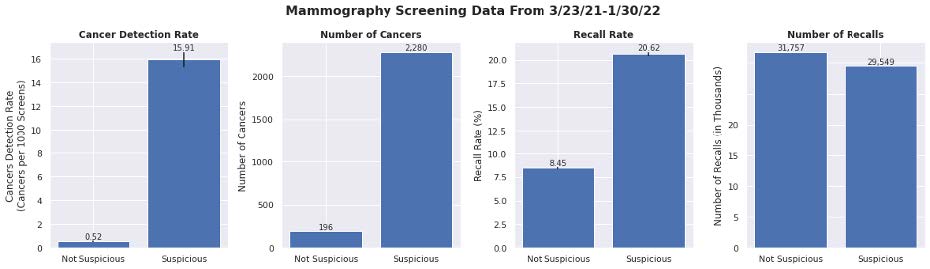

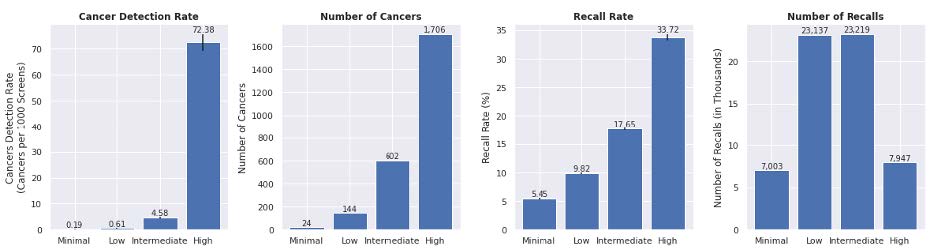

Results

The observed CDR, total cancers detected, recall rate and total patients recalled are reported by suspicion level. The CDR increased exponentially with increasing AI suspicion (0.19, 0.61, 4.58 and 72.38 for Minimal, Low, Intermediate and High, respectively), while the recall rate increased incrementally (5.45, 9.82, 17.65 and 33.72). The CDR in the High category was 381 times that in the Minimal category, even though the two categories had approximately the same number of recalls (7,003 and 7,947, respectively). The Low and Intermediate categories showed a similar but less pronounced pattern: nearly equal recalls (23,137 and 23,219) but a CDR about 8 times greater in the Intermediate category.
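The fold differences quoted above follow directly from the reported values. A short sketch of that arithmetic, using only the numbers in the text (the helper name `fold_change` is hypothetical):

```python
# Values as reported in the Results text, ordered Minimal -> High.
LEVELS = ["Minimal", "Low", "Intermediate", "High"]
CDR = dict(zip(LEVELS, [0.19, 0.61, 4.58, 72.38]))
RECALLS = dict(zip(LEVELS, [7003, 23137, 23219, 7947]))


def fold_change(values, low, high):
    """Ratio of the value in the `high` category to that in the `low` category."""
    return values[high] / values[low]


cdr_high_vs_minimal = fold_change(CDR, "Minimal", "High")
cdr_intermediate_vs_low = fold_change(CDR, "Low", "Intermediate")
recalls_high_vs_minimal = fold_change(RECALLS, "Minimal", "High")

print(round(cdr_high_vs_minimal))         # 381
print(round(cdr_intermediate_vs_low, 1))  # 7.5
print(round(recalls_high_vs_minimal, 2))  # 1.13
```

The Intermediate-to-Low ratio works out to roughly 7.5, which the text rounds to 8.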

Conclusion

In a group of more than half a million women, AI was able to reliably categorize patients using four cancer suspicion levels. Given the dramatic difference in performance at the different suspicion levels, radiologists could potentially increase CDR by focusing on High and Intermediate cases while also lowering recall rates by up to 50% through reducing recalls on Minimal and Low cases, which together accounted for only 7% of all cancers. The greater granularity provided by four categories will likely aid radiologists significantly more than a simple binary triage flag.
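The "up to 50%" and "7%" figures can be checked approximately against the reported Results values. The sketch below back-calculates exam and cancer counts per level, assuming the recall rate is a percentage of exams and the CDR is per 1,000 exams; neither unit is stated in the abstract, so the derived counts are estimates, not reported data.

```python
# Reported values, ordered Minimal -> High.
LEVELS = ["Minimal", "Low", "Intermediate", "High"]
CDR = dict(zip(LEVELS, [0.19, 0.61, 4.58, 72.38]))            # assumed per 1,000 exams
RECALL_RATE = dict(zip(LEVELS, [5.45, 9.82, 17.65, 33.72]))   # assumed percent of exams
RECALLS = dict(zip(LEVELS, [7003, 23137, 23219, 7947]))       # reported recall counts

# Implied exams per level: recalls / (recall rate as a fraction).
exams = {lv: RECALLS[lv] / (RECALL_RATE[lv] / 100) for lv in LEVELS}
# Implied cancers per level: CDR per 1,000 times exams.
cancers = {lv: CDR[lv] / 1000 * exams[lv] for lv in LEVELS}

low_risk = ["Minimal", "Low"]
recall_share = sum(RECALLS[lv] for lv in low_risk) / sum(RECALLS.values())
cancer_share = sum(cancers[lv] for lv in low_risk) / sum(cancers.values())
total_exams = sum(exams.values())

print(f"Minimal+Low share of recalls: {recall_share:.1%}")
print(f"Minimal+Low share of cancers: {cancer_share:.1%}")
print(f"Implied total exams: {total_exams:,.0f}")
```

Under these assumptions, the Minimal and Low categories account for just under half of all recalls and about 7% of detected cancers, and the implied total is close to the reported 519,281 mammograms, which suggests the assumed units are consistent with the abstract.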

Clinical Relevance

AI for mammography can reliably indicate cancer suspicion to clinicians, as supported by large-scale clinical data. Changing behavior by suspicion level could lead to quality improvements for patients.

Radiologist performance measures by AI category at 151 clinical sites (N=519,281 screening mammograms). The top row shows radiologist performance measures across the binary triage categories, while the bottom row shows the same metrics across the more granular set of four categories. The difference in performance is more pronounced with four categories.